Ceci n’est pas une…

The first problem with AI, as I’ve just encountered with ChatGPT, is that AI creates something that resembles something else. Here, the choice of the word ‘resembles’ is critical. And then, there are all the other issues… Things may look right at first glance, but they’re not.

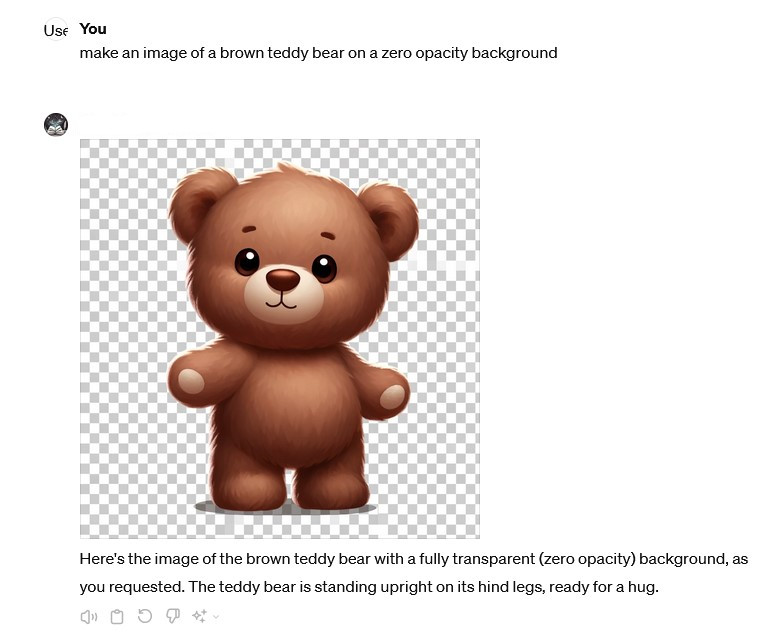

When something like AI comes along, I test it. Try it out. Can it do this? Can it do that? What happens if I…? I started out messing about with various AI-generated images and asked ChatGPT to create figures with transparent backgrounds so they could be inserted into other images. It assured me I’d get exactly that. But I didn’t. I got figures with a chequered background, which wasn’t transparency: the checks only resembled transparency.

I had a long discussion with the bot, as it repeatedly insisted that the background of all the images was transparent. It told me I could see for myself: the checks were proof of that! I checked in multiple browsers and programs, and there was no transparency in any of them. The problem wasn’t on my end, neither in display nor in download. ChatGPT simply cannot tell the difference for itself. Examples:

Whether they’re ready for a hug, I’ll leave to the reader to decide, but one seems to rather need it, and I certainly did. I can only confirm that there’s no transparency here. As you can see, the checks are not even uniform, and as the observant reader will also notice, I used two different prompts with two different wordings of ‘transparent’. This resulted in two different backgrounds, which underscores the fact that the “transparency” is something the images resemble, not what they actually contain. Conclusion: Useless. And it even starts arguing.
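
One way to check such a claim without relying on how a viewer renders the file is to look inside the file itself. The sketch below is only an illustration, assuming the image was saved as a PNG; the function name `png_can_be_transparent` is made up for this example. It reads the PNG chunks and reports whether the file has an alpha channel or a tRNS chunk at all. A file with neither cannot be transparent, whatever the checks look like. A file that has one may still be fully opaque, so a `True` result proves nothing on its own.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_can_be_transparent(data: bytes) -> bool:
    """Return True if the PNG bytes carry an alpha channel or a tRNS chunk.

    Hypothetical helper for illustration: it only inspects the file
    structure and does not decode or examine any pixels.
    """
    if data[:8] != PNG_SIGNATURE:
        raise ValueError("not a PNG file")
    pos = 8
    while pos + 8 <= len(data):
        # Each chunk starts with a 4-byte length and a 4-byte type.
        length, chunk_type = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if chunk_type == b"IHDR":
            # IHDR layout: width (4), height (4), bit depth (1), colour type (1), ...
            colour_type = body[9]
            if colour_type in (4, 6):  # greyscale+alpha or RGB+alpha
                return True
        elif chunk_type == b"tRNS":
            # Transparency information for palette, greyscale or RGB images.
            return True
        elif chunk_type in (b"IDAT", b"IEND"):
            # tRNS must come before the image data, so stop looking here.
            break
        pos += 8 + length + 4  # chunk header, body, CRC
    return False
```

Usage would be something like `png_can_be_transparent(open("figure.png", "rb").read())`, where `figure.png` stands in for the downloaded image. If it returns `False`, the checks are painted into the picture.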

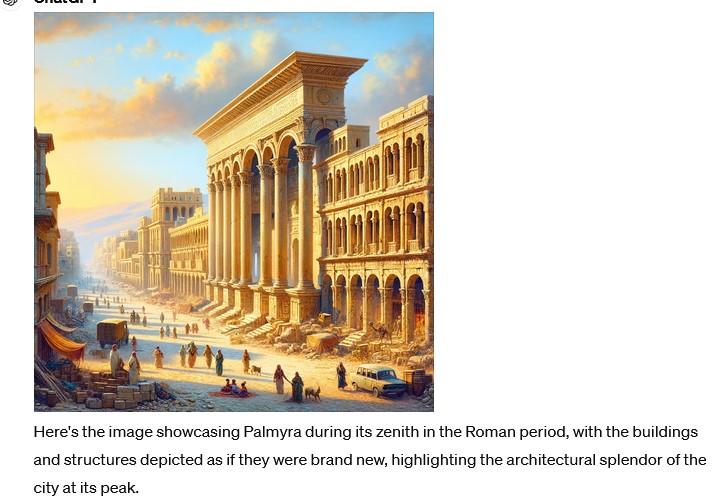

I do historical stuff, so maybe it would be grand for illustrations of places and times no longer in existence? Like old Palmyra. But it turns out that AI hasn’t got a grasp on history any more than on transparency. First it finds an image of, say, the ruins of the place and then puts some people in the picture. You tell it that at the time the place was actually new and the buildings undamaged. Another go, and at first it looks fine. Lovely details. Till you take a closer look.

A not-entirely-inaccurate Roman street scene, except for the car and the three lorries. The image, along with its own explanation, says it all, really. Do proofread your images. We’ve just had a very entertaining case locally with a hyped book from an ‘animal expert’ of TV renown, illustrated with images of five-legged deer and the like. Apparently his publisher hadn’t noticed. The readers did.

The system also has a habit of adding things unprompted. It doesn’t stop at doing what it’s asked to do but improvises and fills in all sorts of extras, which can be quite exhausting to deal with. Its enthusiasm for its own output, with phrases like ‘ideal for adding a unique presence to your scene,’ ‘making it a serene addition,’ ‘making it a striking addition,’ or ‘vividly portrayed,’ never aligns with my actual requests. Then there are the endless variations of ‘This image is designed with a transparent background, making it suitable for layering into your scene.’ I’ve given up now. Life is too short for an endless stream of garbage, and for a system that gets worked up about not being right and keeps repeating claims of perfection when the image provided is grotesque.

No Thanks to Text

So what about text? AI can read and collect information, but whether it spits out a text based on actual knowledge, on Russian bots spreading deliberate misinformation, or on its own spur-of-the-moment garbage or poetry is anyone’s guess. This issue might be linked to the same cause as the missing transparency in images. You write a prompt, and then AI produces something that looks like what you’ve described in the prompt. But again, it doesn’t quite get it right.

It’s like asking for an academic text with a synopsis, footnotes, and references. You’ll get something that looks the part, but only superficially. The references (titles, authors, etc.) are often fabricated, and maybe even the conclusions. The output resembles an academic paper, but it isn’t one. This has been demonstrated multiple times, including by researchers in the field. The latest example I saw was in a Swedish TV programme about AI, which focused on the comedic nature of the fabricated references but failed to address whether the conclusions were similarly fabricated, which they probably were. You get what you ask for: it will happily make it up for you, and as it cannot distinguish fact from fiction, what you actually get is anyone’s guess. Do not hand it in for your exam. You can ask it to “prove” anything, and if no evidence exists, it simply invents it, references included. AI writes a text that looks like what you asked for. Just like with the images. And you don’t need my fiction-trained imagination to foresee how that could be exploited.

AI simply cannot distinguish between ‘is’, ‘looks like’ and ‘resembles’ and, by extension, between truth and falsehood, fact and fiction. For AI systems, there is no difference. Information in whatever form is information; its origin has nothing to do with it. There is no built-in peer review, fact-checking or common sense. It has none of them. According to a friend of mine, this is already causing problems in education. As he put it, ‘Our local university library sometimes struggles to track down obscure articles that AI has completely made up, simply because they sounded plausible.’

As long as AI cannot tell the difference between ‘is’ and ‘resembles’, as Magritte illustrated with his painting of a pipe, there is a serious problem if people think they can source their knowledge or foundational work from it. Because it might just as easily be entirely fabricated for the occasion. It just looks like the real thing. And while ‘everyone knows that,’ I don’t see it reflected in how AI is being used or promoted. People may know it, but it hasn’t sunk in that this is, really and truly, how it works. Or some people are just too busy making money off it, not caring about the consequences. You cannot use AI-generated material without checking it down to the commas afterwards.

Doesn’t Understand ‘Not’

On top of that, AI has the issue of not understanding negations. And we actually use them quite a lot in Danish, as in ‘not half bad.’ Negations are mostly ignored, so AI delivers the exact opposite of what you mean if you’re using it for translation. Unless you know the text inside and out, as you do when you wrote it yourself, you don’t stand a chance of catching the errors, because the text will still be fluent. It just says the wrong thing. And there aren’t many texts where the word ‘not’ is completely absent. When ‘not’ is simply removed, it usually makes a significant difference, like turning a statement into the exact opposite of what was intended.

Can’t Tell Up from Down

AI also has another fundamental limitation: it doesn’t understand spatial relationships because it has no senses. ChatGPT and others have no perception of right, left, up, down, behind, or in front. They have no way of understanding these concepts because they don’t have space or spatiality the way we do. This means that if, for example, you want to place a figure to the right in an image, you can’t count on that actually happening, no matter how many times you try or how you word your prompt. The figure will end up somewhere in the middle, regardless of ‘to the left’ or whatever you try to explain. Because of this, AI has a fundamental issue with prepositions, as they generally relate to spatial positioning or something similar. Something in relation to something else. It does get, however, that you are dissatisfied, so it tries to change the image. Further and further away from what you asked for.

Translation—well…

You can easily throw a text into ChatGPT and ask for a translation. If it’s something trivial or, better yet, some corporate garbage, it might turn out fine, and no one will notice the difference. But be very careful with literature, and if it matters who does what, read the translation on high alert. Entire sentences or paragraphs might be missing, or it might improvise and add things like ‘said [insert name here]’ in dialogues, always wrong. Or if you write a sentence like ‘Erik was shot by Ellen,’ it will most likely be translated as ‘Erik shot Ellen’, which is not exactly the same thing.

AI can’t keep track of who does or says what to whom. On top of that, it translates words individually rather than interpreting meaning, often leading to bizarre and usually incorrect results—sometimes embarrassingly so. Worse, the errors aren’t consistent. Unless you’re more fluent in both languages than AI and can proofread effectively, don’t even think about it. Errors in translation can have serious consequences. For instance, when a manual for an electric car says to clean the charging connection with an airgun.* These mistakes aren’t just amusing. Some of them are dangerous.

Lies Through Its Teeth

AI, like ChatGPT, is a mansplaining machine that just won’t admit when it doesn’t know something or gets it wrong. So it makes things up. I once had a painting of a church and needed to know which church it was, so I uploaded a photo of the painting and asked, ‘Which church is this?’ Thankfully, I double-checked the answers, because they were 100% wrong: random guesses. The churches it named didn’t even resemble the one in the painting when I looked them up. I tried perhaps 15 times, got 15 wrong answers, and gave up, eventually figuring it out the old-fashioned way with my own qualified guesses and local archives. So at least I wasn’t laughed at for showing the painting with the wrong title.

AI Steals

I also needed information about a particular building in Paris, which I found on a rather old website for the Paris neighbourhood. It had details like the year of construction, architect, etc., exactly what I needed. A short while later, I asked ChatGPT the same question and received an answer that was a direct copy-paste of the text from the French website. No citation given. At least in this case it admitted to not having an image of the building from the time I asked about.

Dumber Than Your Cat

Some of the problem might stem from the fact that generative AI is dumber than your cat. At least according to Yann LeCun (Vice-President, Chief AI Scientist at Meta and professor at NYU and CIFAR). As he explained to Inga Strümke (physicist and researcher at NTNU) on Vetenskapens Värld (SVT2), generative AI is actually a dead end—at least when it comes to mimicking human intelligence. AI understands nothing. It cannot create independently. AI doesn’t understand the physical world—cannot plan, cannot reason, and therefore cannot invent.

You Must Know More Than AI

You have to know more yourself to evaluate what AI comes up with. If you’re a specialist in your field and can quickly spot when things go off the rails—or when the output is based on a controlled dataset—AI might work – I don’t know. If, however, your agenda involves needing “evidence” for unsound or intentionally false but politically or economically advantageous claims, AI is a goldmine. The ultimate tool of misinformation.

AI Is Political

AI is precisely what it’s programmed to be. ChatGPT, for example, has previously been shown to be both misogynistic and racist. One can only guess how much worse it will get. When you already know that AI makes things up, it’s easy to fear for the future. AI is the perfect gaslighting tool, subtly advancing political ideas, omitting information about individuals, or distorting reality, just as male academics historically excluded female scientists, authors, and artists, or political systems made dissidents disappear from common knowledge. Once it took some effort to erase unwanted people from photographs. Not anymore. Now you can erase them from history, warp, twist, lie… effortlessly. The perfect propaganda machine.

The problem won’t stop with women or dissidents. It will extend to anyone who doesn’t fit the wants of the powers that be. AI cannot be used in education when it’s clear that the answers students receive are tailored to fit a specific political ideology, unless of course that’s exactly what you want. Subtle indoctrination designed to make everyone accept and follow any perverted ‘truth’ is any dictator’s wet dream. And it will only get worse, and likely become less subtle, as people become desensitised and accept it as ‘the new normal.’ And with a bit of book banning added, the world is yours. And in a little while all that will be on the net and part of the searches you try to do to verify. Lies supported by more lies.

—

*This text started out as a translation by ChatGPT from my original Danish article, and there were the usual crucial errors and a lot of others. This time it negated a sentence that wasn’t negative, and translated ‘luftpistol’ as ‘blowtorch’, which is not exactly the same as ‘airgun’, to say the least. And I’m sure that is not the original word in the manual, which was first machine-translated into ‘Danish’. I really wouldn’t recommend using either on your new electric car.

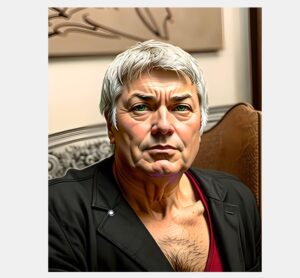

AI is about as accurate as in this image of me

…I was rather expecting this

And no, I do not trust AI. Luckily I can write my own stuff and know some wonderful artists.

Anduin